There are all kinds of rating systems online today. One of the most popular is the 5-star rating system. You’ve seen it everywhere, from Yelp to iTunes to Amazon to Netflix.

Unfortunately, 5-star rating systems are hard to use well, and numerous studies have identified flaws in the method.

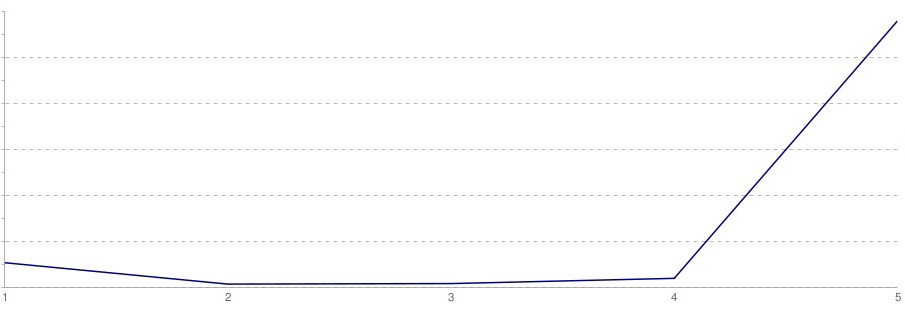

The graph illustrates the main issue with the 5-star rating scale: most ratings land on either 1 star or 5 stars. Most people only leave a rating if they really like or really dislike something, which makes the 2-, 3-, and 4-star options largely unnecessary.

After learning this, YouTube moved to a thumbs-up / thumbs-down rating system. However, removing every option in between might go too far. A third, middle option (the ‘meh’ choice) helps balance things out.

Problem 1:

Items are ranked by average rating alone: the higher the average, the higher the item is listed.

For example:

Product A has 10 ratings: nine are 5 stars and one is 1 star, for an average rating of 4.6 stars.

Product B has 100 ratings: 85 are 5 stars and 15 are 1 star, for an average rating of 4.4 stars.

Product A will be listed above Product B because it has a higher average. However, Product A has significantly fewer ratings and doesn’t necessarily deserve to be ranked above Product B.
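To make the ranking problem concrete, here is a minimal sketch that recomputes both averages and then applies one common workaround: a damped average that mixes in a few imaginary "neutral" ratings so that small samples are pulled toward the middle. The function names, the neutral value of 3 stars, and the weight of 10 imaginary ratings are our own illustrative assumptions, not something any particular site is known to use.

```python
def mean_rating(counts):
    """Plain average from a {stars: number_of_ratings} mapping."""
    total = sum(counts.values())
    return sum(stars * n for stars, n in counts.items()) / total


def damped_mean(counts, prior_mean=3.0, prior_weight=10):
    """Average after adding `prior_weight` imaginary ratings at `prior_mean`.

    Both defaults are illustrative assumptions, not values from any real site.
    """
    total = sum(counts.values())
    points = sum(stars * n for stars, n in counts.items())
    return (prior_mean * prior_weight + points) / (prior_weight + total)


product_a = {5: 9, 1: 1}     # 10 ratings
product_b = {5: 85, 1: 15}   # 100 ratings

for name, counts in (("A", product_a), ("B", product_b)):
    print(name, round(mean_rating(counts), 2), round(damped_mean(counts), 2))
```

With these assumed settings, Product A keeps the higher plain average (4.6 versus 4.4), but Product B comes out ahead on the damped score (about 4.27 versus 3.8) because its rating rests on ten times as many votes.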

Problem 2:

Averages can be misleading and distract you from important details within specific ratings. Sometimes a single review matters more than all the others. We can’t find a better way to illustrate this point than with this comic from XKCD, which pretends to display ratings for a ‘Tornado Guard’ app that alerts you when tornadoes are near. The app has an average rating of 4 stars, but it’s only the last review that really matters.

If you were a quarterback and threw the ball one foot too far ahead of your receiver half the time, and one foot too far behind your receiver the other half of the time, you wouldn’t see a lot of playing time. But if we measured your accuracy by averaging those misses, we might conclude that, on average, you were extremely accurate. And that is the basic problem with averages: they can hide what you need to know.
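The quarterback example can be checked in a few lines. This is only a sketch: the ten throws and the variable names are made up for illustration, with +1 meaning one foot ahead of the receiver and -1 meaning one foot behind.

```python
from statistics import mean, pstdev

# Signed miss distance in feet for ten hypothetical throws.
misses = [1, -1] * 5

average_miss = mean(misses)                  # signed errors cancel each other out
typical_miss = mean(abs(m) for m in misses)  # size of a miss, ignoring direction
spread = pstdev(misses)                      # population standard deviation

print(average_miss, typical_miss, spread)
```

The signed average is zero, which looks perfect, while the typical miss and the spread are both one foot. Reporting a measure of spread next to the average is one simple way to keep this kind of variation visible.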

Averages hide variation

Averages are simple to calculate and are sometimes a lazy way of determining past performance. For example, over some period of time, a performance level may have started at 50% and ended up at 70%. Simple math then determines the average to be 60%, so 60% is now used as a baseline to measure future performance against.

If the next performance level measured is 65%, you might conclude that performance has improved, which it has relative to the 60% baseline. But in fact, it has degraded from the most recent level of 70%. Your next decisions could lead you in the wrong direction.
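The same arithmetic, written out as a small sketch (the variable names are ours):

```python
start, end = 50, 70            # performance (%) at the start and end of the period
baseline = (start + end) / 2   # the average that gets used as the baseline
latest = 65                    # the next measurement

change_vs_baseline = latest - baseline   # positive: looks like an improvement
change_vs_latest = latest - end          # negative: actually a decline

print("vs. average baseline:", change_vs_baseline)
print("vs. most recent level:", change_vs_latest)
```

The same 65% reading is five points up or five points down depending on which reference you pick, which is why the choice of baseline deserves more thought than a quick average.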

Averages are easy to manipulate. The simplest way to manipulate averages is to change the base period or exclude the “outliers,” which are those data points that seem to be abnormally high or low when compared with the majority. One of our clients once told us that the key to understanding what drives performance is not to exclude outliers but to study them.

By looking at the best and worst months over the last year, he learned what most impacted his results. Another outlier study we learned about (from the automotive industry) is a concept called BOB WOW, which stands for best-of-the-best and worst-of-the-worst. This one we find effective for looking at variances in individual performance. Studying the outliers gives insight into what helps or hinders success.

In one retail project we did for a shoe manufacturer, we observed the company’s most and least successful salespeople. We found the simple act of getting people to try on shoes had a marked impact on their likelihood to purchase. The sales reps couldn’t specifically articulate it, but their greeting, questions, and even movement were designed to get you to invest time and energy in putting on shoes. Over time they had learned intuitively that this one action led to better odds of making a sale.

Consider the case of the statistician who drowns while fording a river that he calculates is, on average, three feet deep. If he were alive to tell the tale, he would expound on the “flaw of averages,” which states, simply, that plans based on assumptions about average conditions usually go wrong. This basic but almost always unseen flaw shows up everywhere in business, distorting accounts, undermining forecasts, and dooming apparently well-considered projects to disappointing results.

Let’s say that a company I’ll call HealthCeuticals sells a perishable antibiotic. Although demand for the drug varies, for years the average monthly demand has been 5,000 units, so that’s the quantity the company currently stocks. One day, the boss appears. “Give me a forecast of demand for next year,” he says to his product manager. “I need it to estimate inventory cost for the budget.” The product manager responds, “Demand varies from month to month. Here, let me give you a distribution.” But the boss doesn’t want a “distribution.” “Give me a number!” he insists. “Well,” the manager says meekly, “the average demand is 5,000 units a month. So, if you need a single number, go with 5,000.”

The boss now proceeds to estimate inventory costs, which are calculated as follows: If monthly demand is less than the amount stocked, the firm incurs a spoilage cost of $50 per unsold unit. On the other hand, if the demand is greater than the amount stocked, the firm must air-freight the extra units at an increased cost of $150 each. These are the only two costs that depend on the accuracy of the forecast. The boss has developed a spreadsheet model to calculate the costs associated with any given demand and amount stocked. Since the average demand is 5,000 units, he plugs in 5,000. Since the company always stocks 5,000 units, the spreadsheet dutifully reports that for this average demand, the cost is zero: no spoilage or airfreight costs.

A bottom line based on average assumptions should be the average bottom line, right? It may miss fluctuations from month to month, but shouldn’t you at least get the correct average cost by plugging in average demand? No. It’s easy to see that the average cost can’t be zero by noting that when demand for HealthCeuticals’ antibiotic deviates either up or down from the average, the company incurs costs.
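A short simulation makes the point concrete. The cost figures below come from the example, but the article does not say how demand is distributed, so the sketch assumes (purely for illustration) that monthly demand is roughly normal with a mean of 5,000 units and a standard deviation of 1,000. The function and variable names are also our own.

```python
import random

STOCK = 5_000          # units stocked each month (from the example)
SPOILAGE_COST = 50     # dollars per unsold unit
AIRFREIGHT_COST = 150  # dollars per unit of demand above the stocked amount


def monthly_cost(demand, stock=STOCK):
    """Spoilage cost if demand is below stock, air-freight cost if it is above."""
    if demand < stock:
        return SPOILAGE_COST * (stock - demand)
    return AIRFREIGHT_COST * (demand - stock)


rng = random.Random(0)  # fixed seed so the run is repeatable
# Assumption: normal demand, mean 5,000, standard deviation 1,000 (hypothetical).
demands = [max(0.0, rng.gauss(5_000, 1_000)) for _ in range(100_000)]

cost_of_average_demand = monthly_cost(5_000)
average_cost = sum(monthly_cost(d) for d in demands) / len(demands)

print("cost at average demand:", cost_of_average_demand)
print("average cost over simulated months:", round(average_cost))
```

Plugging in the average demand gives a cost of zero, exactly as the boss's spreadsheet reports. Averaging the cost over the simulated months gives a figure on the order of tens of thousands of dollars under these assumptions, because every deviation from 5,000, in either direction, costs money.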

Show Me the Number

Executives’ desire to work with “a number,” to plug in an average figure, is legendary. But whenever an average is used to represent an uncertain quantity, it ends up distorting the results because it ignores the impact of the inevitable variations. Averages routinely gum up accounting, investments, sales, production planning, even weather forecasting. Even the Generally Accepted Accounting Principles sanction the “flaw,” requiring that uncertainties such as bad debt be entered as single numbers. (To its credit, the SEC has proposed new rules that would begin to address this problem.)

In one celebrated, real-life case, relying on averages forced Orange County, California, into insolvency. In the summer of 1994, interest rates were low and were expected to remain so. Officials painted a rosy picture of the county’s financial portfolio based on this expected future behavior of interest rates. But had they explicitly considered the well-documented range of interest-rate uncertainties, instead of a single, average interest-rate scenario, they would have seen that there was a 5% chance of losing $1 billion or more—which is exactly what happened. The average hid the enormous riskiness of their investments.

More recently, a failure to appreciate the flaw led to $2 billion in property damage in North Dakota. In 1997, the U.S. Weather Service forecast that North Dakota’s rising Red River would crest at 49 feet.

Officials in Grand Forks made flood management plans based on this single figure, which represented an average. In fact, the river crested above 50 feet, breaching the dikes, and unleashing a flood that forced 50,000 people from their homes.

Fixing the Flaw

Some executives are already attuned to the importance of acting on a range of relevant numbers—a distribution—rather than single values, and they employ statisticians who estimate the distributions of everything from stock prices to electricity demand. Increasingly, companies are also turning to software-based cures for the flaw.

Many programs now simulate uncertainty, generating thousands of possible values for a given scenario—in essence, replacing the low-resolution “snapshot” of a single average number with a detailed “movie.” The movie comprises a whole range of possible values and their likelihood of occurring—the frequency distribution.

The simplest and most popular tool, called the Monte Carlo simulation, was described by David Hertz in a 1964 HBR article and popularized in financial circles by sophisticated users like Merck CFO and executive vice president Judy Lewent. Today, spreadsheet-based Monte Carlo simulation software is widely available and is being used in fields as diverse as petroleum exploration, financial engineering, defense, banking, and retirement portfolio planning.

Wells Fargo Bank, for instance, used a Monte Carlo simulation to predict the cost of offering customers a variable-rate CD, whose return would increase if interest rates rose. A previous estimate based on three years of 1990s interest-rate data had shown that the cost would be about 0.10% for a five-year CD.

But the Monte Carlo simulation, which combined interest-rate data going back to 1965 with models of customer behavior, found that the bank’s cost could be eight times that amount. The alarming finding induced the bank to reconfigure its CD product to reduce the chance of unacceptable costs should interest rates rise.

Had the average-obsessed boss at HealthCeuticals used Monte Carlo simulation, he would have seen not only that the average inventory cost was not zero but that he shouldn’t have been stocking 5,000 units in the first place. For executives like him who are still fond of single values, it’s time for a shift in mindset. Rather than “Give me a number for my report,” what every executive should be saying is “Give me a distribution for my simulation.”
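As a rough sketch of what such a simulation might look like for HealthCeuticals, the code below tries a range of stocking levels against the same simulated months and keeps the cheapest one. The demand distribution (normal, mean 5,000, standard deviation 1,000), the candidate stocking levels, and all names are assumptions made for illustration; only the two unit costs come from the example.

```python
import random

SPOILAGE_COST = 50     # dollars per unsold unit
AIRFREIGHT_COST = 150  # dollars per unit air-freighted to cover a shortfall


def average_cost(stock, demands):
    """Mean monthly cost of stocking `stock` units across the simulated demands."""
    total = 0.0
    for demand in demands:
        if demand < stock:
            total += SPOILAGE_COST * (stock - demand)
        else:
            total += AIRFREIGHT_COST * (demand - stock)
    return total / len(demands)


rng = random.Random(0)  # fixed seed so the run is repeatable
# Assumption: normal demand, mean 5,000, standard deviation 1,000 (hypothetical).
demands = [max(0.0, rng.gauss(5_000, 1_000)) for _ in range(20_000)]

candidates = range(4_000, 7_001, 100)   # stocking levels to try
best_stock = min(candidates, key=lambda s: average_cost(s, demands))

print("best stocking level:", best_stock, round(average_cost(best_stock, demands)))
print("stocking the average:", 5_000, round(average_cost(5_000, demands)))
```

Because running short costs three times as much per unit as running over, the cheapest stocking level in this sketch lands above the average demand of 5,000. The exact figure depends entirely on the assumed distribution, which is the reason to ask for a distribution rather than a single number.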


[geoip_detect2_show_if country=”sg”][insert page=’best-Singapore-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sx”][insert page=’best-Sint-Maarten-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sk”][insert page=’best-Slovakia-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”si”][insert page=’best-Slovenia-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sb”][insert page=’best-Solomon-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”so”][insert page=’best-Somalia-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”za”][insert page=’best-South-Africa-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”gs”][insert page=’best-South-Georgia-and-the-South-Sandwich-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ss”][insert page=’best-South-Sudan-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”es”][insert page=’best-Spain-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”lk”][insert page=’best-Sri-Lanka-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sd”][insert page=’best-Sudan-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sr”][insert page=’best-Suriname-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sj”][insert page=’best-Svalbard-and-Jan-Mayen-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”se”][insert page=’best-Sweden-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ch”][insert page=’best-Switzerland-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”sy”][insert page=’best-Syria-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tw”][insert page=’best-Taiwan-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tj”][insert page=’best-Tajikistan-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tz”][insert page=’best-Tanzania-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”th”][insert page=’best-Thailand-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tg”][insert page=’best-Togo-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tk”][insert page=’best-Tokelau-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”to”][insert page=’best-Tonga-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tt”][insert page=’best-Trinidad-and-Tobago-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tn”][insert page=’best-Tunisia-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tr”][insert page=’best-Turkey-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tm”][insert page=’best-Turkmenistan-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tc”][insert page=’best-Turks-and-Caicos-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”tv”][insert page=’best-Tuvalu-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ug”][insert page=’best-Uganda-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ua”][insert page=’best-Ukraine-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ae”][insert page=’best-United-Arab-Emirates-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”gb”][insert page=’best-UK-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”us”][insert page=’best-US-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”um”][insert page=’best-United-States-Minor-Outlying-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”uy”][insert page=’best-Uruguay-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”uz”][insert page=’best-Uzbekistan-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”vu”][insert page=’best-Vanuatu-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ve”][insert page=’best-Venezuela-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”vn”][insert page=’best-Vietnam-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”vg”][insert page=’best-british-Virgin-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”vi”][insert page=’best-us-Virgin-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”wf”][insert page=’best-Wallis-and-Futuna-Islands-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”eh”][insert page=’best-Western-Sahara-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”ye”][insert page=’best-Yemen-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”zm”][insert page=’best-Zambia-online-casinos’ display=’content’][/geoip_detect2_show_if]

[geoip_detect2_show_if country=”zw”][insert page=’best-Zimbabwe-online-casinos’ display=’content’][/geoip_detect2_show_if]